Installing Linux on an old Thinkpad is “middle aged dad decides to get fit by doing toe touches in his boxers” except you don’t get disgusted & give up. Instead you blog about how awesome it is until it’s not and then you stop blogging for six months in hopes everyone forgets.

Well, it thinks I’d probably read my own blog, anyhow.

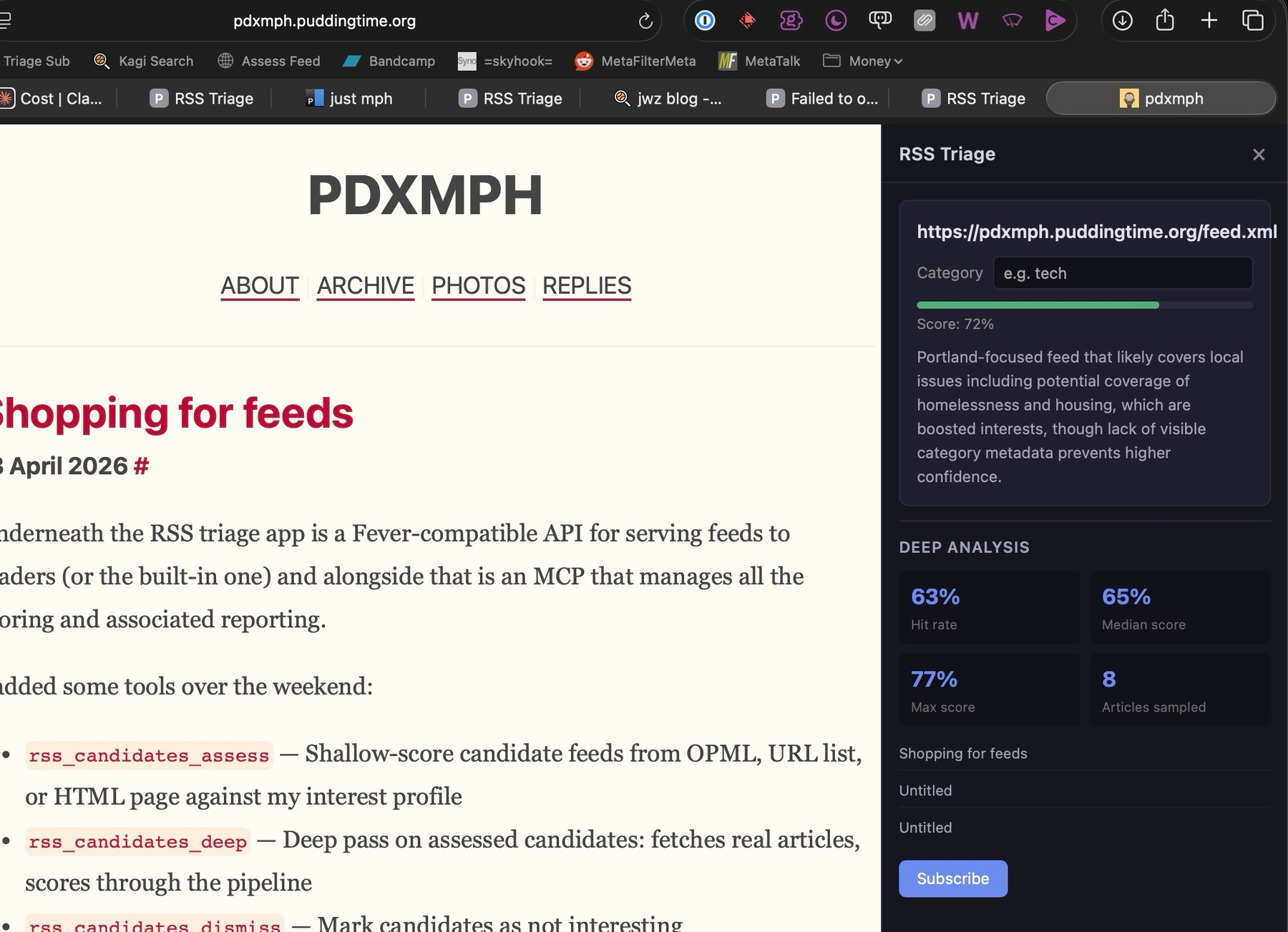

Shopping for feeds

Underneath the RSS triage app is a Fever-compatible API for serving feeds to readers (or the built-in one) and alongside that is an MCP that manages all the scoring and associated reporting.

I added some tools over the weekend:

- rss_candidates_assess: Shallow-score candidate feeds from OPML, URL list, or HTML page against my interest profile

- rss_candidates_deep: Deep pass on assessed candidates: fetches real articles, scores through the pipeline

- rss_candidates_dismiss: Mark candidates as not interesting

- rss_candidates_subscribe: Promote candidates to real subscriptions

I’ve been grabbing OPML from sites like ooh.directory and dragging them into Claude Desktop where the MCP does a shallow pass against headlines, suggests some likely candidates, then does a deep pass on the stronger ones by pulling actual articles.
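For anyone who hasn't poked at OPML: it's just XML, and the feed URLs live in `xmlUrl` attributes on `outline` elements. Here's a minimal sketch of the "get candidates out of an OPML file" step using only the Python standard library. It is not the MCP's actual code; the function name and the sample feeds are made up for illustration.

```python
import xml.etree.ElementTree as ET


def feeds_from_opml(opml_text: str) -> list[tuple[str, str]]:
    """Return (title, feed_url) pairs for every outline that carries an xmlUrl."""
    root = ET.fromstring(opml_text)
    feeds = []
    # Outlines can nest (folders contain feeds), so walk the whole tree.
    for outline in root.iter("outline"):
        url = outline.get("xmlUrl")
        if url:
            # Fall back through the usual label attributes, then the URL itself.
            title = outline.get("title") or outline.get("text") or url
            feeds.append((title, url))
    return feeds


# Hypothetical sample input; the feeds and URLs are placeholders.
SAMPLE_OPML = """<opml version="2.0">
  <head><title>Example list</title></head>
  <body>
    <outline text="Management">
      <outline text="Example Blog" type="rss" xmlUrl="https://example.com/feed.xml"/>
    </outline>
    <outline text="Another Blog" type="rss" xmlUrl="https://example.org/rss"/>
  </body>
</opml>"""

candidates = feeds_from_opml(SAMPLE_OPML)
for title, url in candidates:
    print(title, url)
```

Folder outlines (the ones without an `xmlUrl`) are skipped, so a deeply categorized directory export flattens into one candidate list for the shallow pass.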

I found some pretty interesting management blogs this way, and it’s helpful for sifting through long, general lists. Because everything hits the scoring queue and triage is easy, it feels less risky to just grab a bunch on their speculative quality and know they’ll get sorted out after a little while.

I also built an endpoint to feed a URL in via a bookmarklet (or eventual browser extension), so the traditional “subscribe to this site” bookmarklet gets a makeover by using the scoring pipeline to offer a quick summary before categorizing and subscribing.

I dunno … it seems to be encouraging me to subscribe to stuff, and my reading list is diversifying. Seems good.

… then a run through impeccable to help me get it to better information density and less monotony.

I guess the elevator pitch has become “Google Reader, except it learns from what you skip and star and lets you make a killfile and whatever the opposite of a killfile would be that also uses inference to let you be kind of loose with that.”

One frustration and one unexpected insight from the RSS service

I spent more time working on rss-triage this weekend. One thing was frustrating but ultimately helpful, and one thing was a great outcome I didn’t think about going in.

The frustrating thing was having to abandon “did I read this” as a scoring signal. It seems like a good idea to include it, but:

First, feed readers are pretty annoying if you’re trying to measure read state, because it’d be a complex problem to solve for very little payoff unless your reader’s whole project is “help people figure out what’s worth reading,” and almost none of them do make that their project. There’s been this little ripple through the “people who like RSS” world calling out the stale state of RSS reading (“they all look like mail readers”) and none of the “looks like a mail reader” development community seems to have thought “what would the built-in spam filtering of a mail reader look like in an RSS reader?” They just think “did you glance at it? I’ll pass that back to the feed backend so you don’t see it a second time on another client somewhere.”

Readwise Reader kind of does solve this – it tracks how far down an article you’ve scrolled. That could be a great signal but then you’re using Readwise Reader.

Second, I end up reading things in other places: From Linkding, from Linkwarden, in Wallabag, just opening the link in a browser. They mostly have some variant on “read” or “unread,” but they generally require a manual toggle. So tracking what I read and didn’t requires manual interventions.

So with some disappointment I yanked was_read out of the feed scoring pipeline, and I’m down to “did I star it, did I skip it, or did a rule I created block it?” Those are all at least clear behavioral signals that can be combined to suggest the future success of a given feed from past behavior. was_read would have been, too, but I’m not ready to create an entire RSS reader of my own to give myself more guarantees.

And I still have the inference-driven stuff to help auto-triage: Given much firmer behavioral measures, the inference layer is getting stronger signal about the reputational thumb it should put on the article scoring scale. It is also doing a good job with my more subjective, inference-friendly nudges: Project version bumps, crime stories, headlines that make it hard to figure out the subject, etc. all get downweighted.

Finally, I have the topic surge filtering layer: Work requires me, for instance, to keep up with the latest in Gemini. It doesn’t require me to read 20 separate press release rewrites from my Google News search feed.

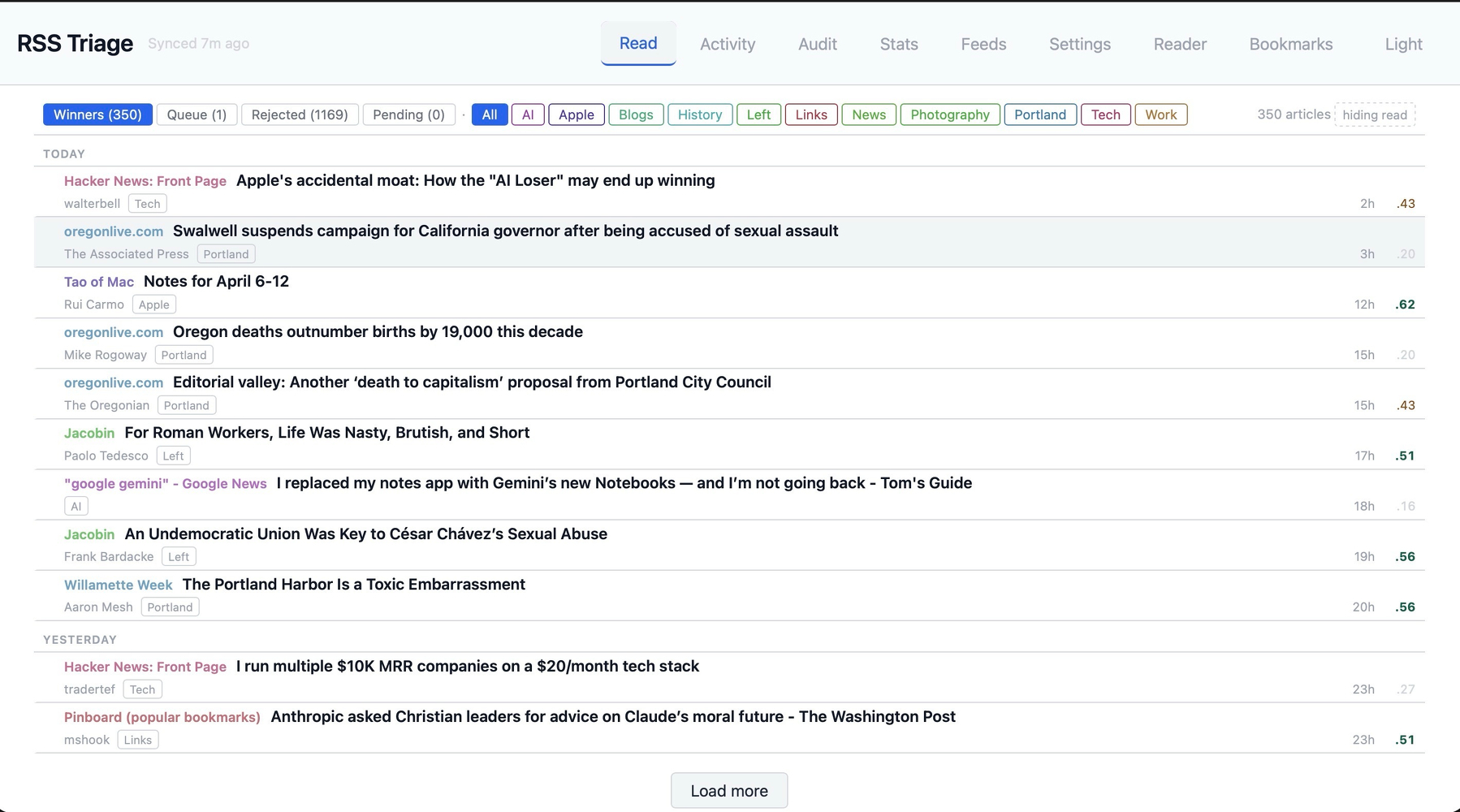

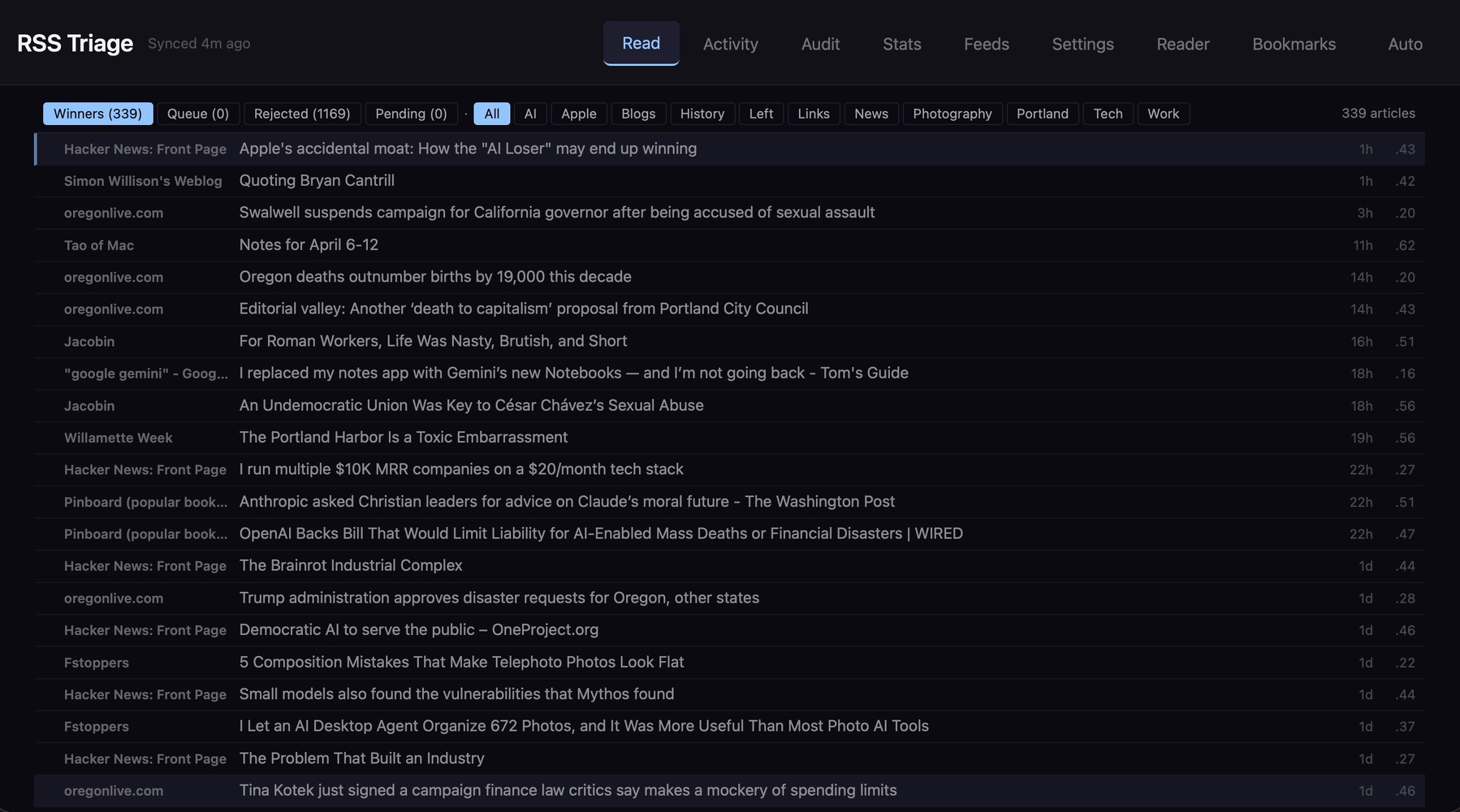

So … is it working?

Yeah, I would say it is. I’ve got a few tabs in the UI that let me go in and see what inference has ruled out, and I am disagreeing with it less and less. The past few days I just scroll down the reject pile and hit the big red “dismiss them all” button instead of overriding any of them.

I’ve been cautious about letting it recommend, though. As eager as I am to filter out dead wood (anything scoring below 3/10) I won’t let it promote anything below 7/10, and it hasn’t suggested much yet. Makes sense. I’ve spent more time telling it what I don’t like than what I do like, so there’s not much for inference to dig in on to start filling in the high end of the curve.

Oh, right. So the unexpected part:

Nothing that unpleasant, really. As I’ve been working on the pipeline tuning parameters, I’ve been in a validation cycle of “tweak -> rescore -> review.”

The tool gives me a categorized list of all my feeds along with an attention score for each, based on how many articles from that source the system has seen, how many I have starred, how many I have skipped, some recency calculations, and a Bayes smoother that takes its time judging lower volume feeds but gets more opinionated with higher volume ones faster. To pick on The Oregonian again:

- 765 articles seen in the 14-day window

- 307 dismissed (which means 458 hit a filter)

- 41 starred (deemed interesting) for a 5% “star rate.”

- 24 attention score

… or Hacker News:

- 414 seen

- 326 dismissed (88 hit a filter)

- 51 starred, for a 12% star rate

- 29 attention score

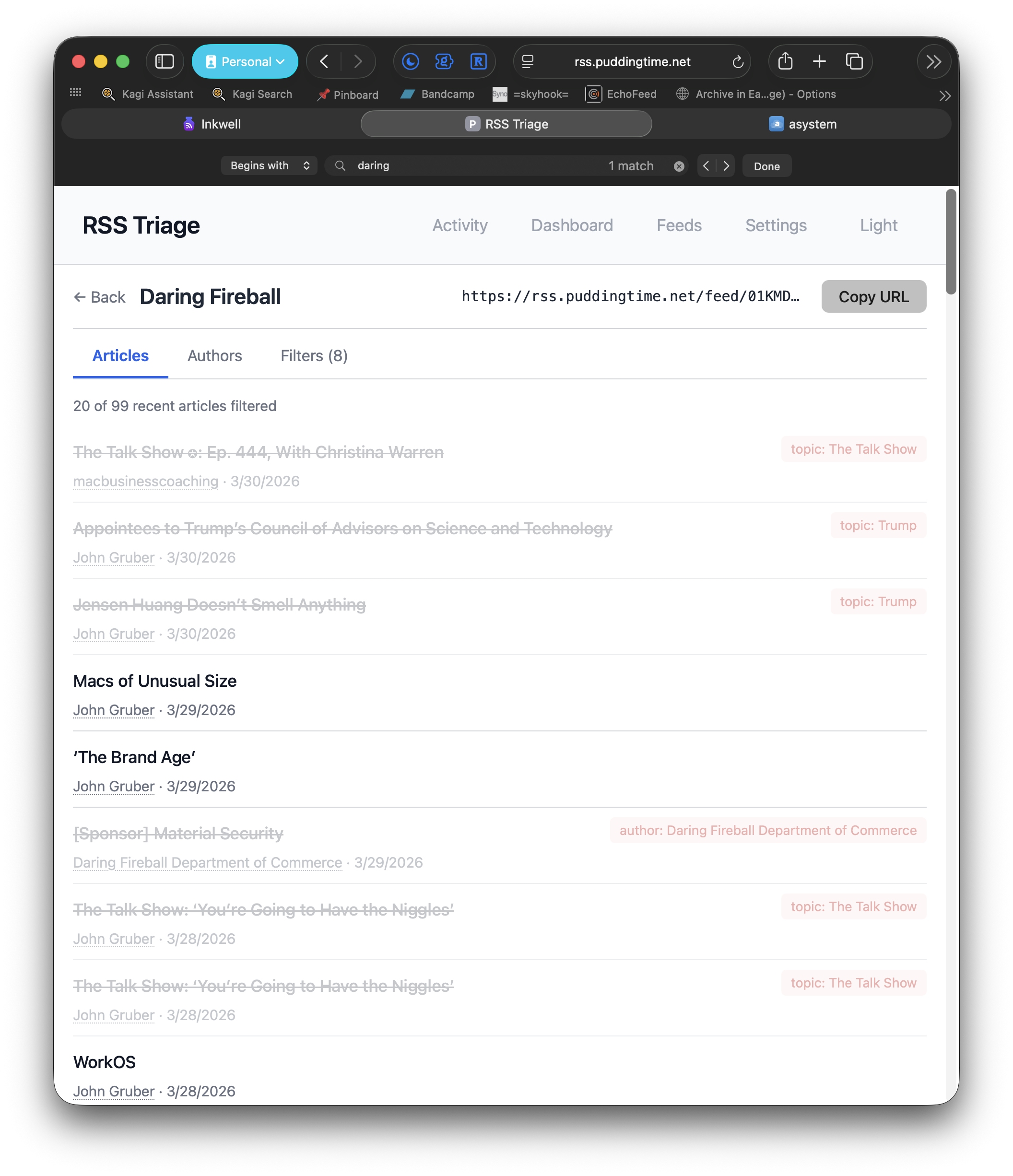

… or Daring Fireball

- 82 seen

- 67 dismissed

- 5 starred, for a 6% star rate

- 33 attention score

… or Jacobin:

- 78 seen

- 46 dismissed

- 30 starred, for a 38% star rate

- 52 attention score

I’ve made it easy to get those numbers because I’m still tuning the pipeline and figuring out the assorted weights, but ideal steady state is “I have no idea what those numbers are any more, I just know I see a lot of good things and far fewer bad things; and also I argue with the inference layer less in review.”
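To make the "Bayes smoother" idea concrete, here is a rough sketch using the seen/starred counts from the lists above. It only covers the star rate, not the full attention score (which also folds in skips and recency), and the prior values are assumptions picked for illustration, not the pipeline's real parameters.

```python
from dataclasses import dataclass


@dataclass
class FeedStats:
    name: str
    seen: int
    starred: int


def star_rate(feed: FeedStats) -> float:
    """Raw share of seen articles that got starred."""
    return feed.starred / feed.seen if feed.seen else 0.0


def smoothed_star_rate(feed: FeedStats, prior_rate: float = 0.10,
                       prior_weight: float = 50.0) -> float:
    """Star rate pulled toward an assumed prior.

    prior_rate and prior_weight are hypothetical: the prior behaves like
    50 imaginary articles starred at 10%, so a low-volume feed stays close
    to the prior while a high-volume feed is dominated by its own history.
    """
    return (feed.starred + prior_rate * prior_weight) / (feed.seen + prior_weight)


# Counts taken from the 14-day window figures in this post.
FEEDS = [
    FeedStats("The Oregonian", seen=765, starred=41),
    FeedStats("Hacker News", seen=414, starred=51),
    FeedStats("Daring Fireball", seen=82, starred=5),
    FeedStats("Jacobin", seen=78, starred=30),
]

for feed in FEEDS:
    print(f"{feed.name}: raw {star_rate(feed):.0%}, "
          f"smoothed {smoothed_star_rate(feed):.0%}")
```

With these assumed priors, a 78-article feed like Jacobin moves a lot further from its raw rate than a 765-article feed like The Oregonian does, which is the "takes its time judging lower volume feeds" behavior.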

Anyhow … what is interesting to me is that I have 60 feeds running through this system, and by introspecting this system a lot, I’ve been forced to see how much of them I actually care to read vs. what I don’t. That has caused me to realize that a lot of my feed list is probably either coming from habit or aspiration, not active interest. Always worth sitting with.

The Brompton is a pleasant surprise and I'm keeping it

I took my Brompton C-Line on the train to Eugene. I used it to bike to the Max station, then rode to Union Station, then checked it with Amtrak and picked it up in Eugene and rode it to my hotel.

I could have just taken it as a carry on, but I haven’t ridden Amtrak in a while and forgot how storage space works in the compartment. My most recent rail experience was in France, and the train ride from Lyon to Paris involved some genuine chaos in the baggage area, so that was the memory I could summon and I didn’t like it. I think I could have stuck it in the overhead bin on the Amtrak, and if there’d been no space I could have left it at the end of the car. Anyhow, it was $5 to just hand it off, and next time I’ll remember.

For as many bikes as I’ve owned, I don’t really know much about them. I couldn’t predict, based on specs around gearing and geometry, how a bike will really feel. I will say that of all the bikes I’ve owned over the years, the two that have felt the very best are my Brompton and a Trek Crossrip (one of the ones with carbon forks).

What is “best”?

I’d say it’s a combination of easy to understand stuff in the form of my riding posture and how easy it is to feel like I have good situational awareness without paying in a sense of balance, and something tangible but hard to explain in the form of I dunno what I’d call it … time to cruising speed? Smoothness to cruising speed?

I guess it’s just a gearing thing. The Crossrip and the Brompton both felt/feel easy to get up to a speed where I feel like I’m cruising at a satisfying speed and then not pedaling too hard or too fast to stay there.

It doesn’t surprise me that the Crossrip felt that way. It seems to be a well regarded bike whose departure from the lineup is lamented. It does surprise me a bit with the Brompton, given its tiny wheels and foldability. I’ve had other, less expensive folders and you feel the tradeoffs in the ride, and even with 20" wheels they don’t have that sense of smooth ramp and balanced control.

When I bought the Brompton I loved the test ride and was surprised at how good it felt, but I was pretty sure it wouldn’t feel good for a ride all the way downtown and back, which is 10 miles if I take the long way along the Springwater and more like 7 or 8 if I cut through inner southeast. I imagined it as a good bike for stuff inside five miles: Errand runs into Woodstock, maybe trips to Sellwood, but mostly as a last-couple-of-miler from the Max, or a one-way “meet Al for drinks after work, toss it in the back” bike.

In practice, though, nowhere feels too far on it. Spinning back up the hill on Clinton from downtown, and then up the hill on 51st toward Foster can get a little tiring, but it’s not hard to find a gear and go to my happy place until those are over.

And it gets to a nice cruising speed. I overtook some Sunday riders on the Springwater at what felt like an easy pace and one of them muttered “goddamn ebikes” to his buddy as I went by.

Well, whatever it is, it works.

When I think back to 2013—the year 500 miles were enough to win the bike commute challenge at work—I did end up getting an ebike because the 20 mile round trip to work was taking a toll some days. One afternoon someone drafted me all the way from somewhere around Oaks Park to my exit at Lents and said “maybe someday you’ll get a real bike” as she pedaled away. Well, I have one, and still the hate.

Birthday dinner with Ben and his friends

I’ve recently figured out that interactions with a particular human have taken on a curiously stilted, disjointed flavor because they’re using an LLM for coaching on how to navigate situations. Alison says she’s been watching someone’s emails at work become increasingly demanding and vaguely paranoid, and she knows it’s LLM-driven because the “author” screwed up and left a reference to a prompt intact in one email.

For me the tell isn’t em-dashes or particular rhetorical tics, or even extreme changes. A human plainly remains somewhere in the loop. It’s a shift in the tone and what I guess we could call shared agreements or previously understood ground truths.

On that last, I suppose it’s a matter of taking the subjectivity we have to assume about every shared agreement or understanding—the simple wisdom of knowing that nobody ever really sees things exactly as we do—and sensing that the previous delta in understanding is the starting point for an LLM’s stochastic narrative-building and elaboration. A predictable and acceptable standard deviation is stretching to several standard deviations.

There are plenty of stories of people using LLMs to descend into a widening gyre of elaborate and dysfunctional subjectivity. As always, given how mass media works, the stories are lurid and extreme. But they always seem to start with a belief that the LLM managed to crystallize something for the person—seemed to speak to something they suspected but didn’t have language for, or support to believe—and then began to spin out from that initial earned trust into statistically average delusion, and then decidedly abnormal madness, where an NYT or Guardian reporter eagerly awaits.

I don’t think I’m watching someone go mad. I think I’m watching someone set aside their native competence and fundamental epistemic agency in favor of a defective cognitive prosthetic that has no mechanism or feature to mediate its own oscillations: An understanding goes in, the delta in understanding is part of a prompt, the feedback pulls further in some random direction, the delta in understanding widens, the next prompt is even further adrift, the oscillations widen.

But like I said, nobody’s going mad. I’m not concerned for anyone’s safety. I’m just concerned about a relationship, and contemplating my own responsibility in the matter, because I can’t help but wonder if more care with clarity or more patience on my part would have made an LLM a less attractive problem-solving partner.

I would prefer someone trying to find their way to understanding imperfectly but authentically to this.

I’m not sure what to do.

“I Still Prefer MCP over Skills”

Me, too. I think I get why skills have momentum, and among whom they have it, but MCPs provide a kind of ubiquity across UIs that skills can’t touch.

Union Station

Headed for Eugene to see Ben

RSS Topic Fatigue

I subscribe to a lot of RSS feeds that are very sensitive to trends: The “popular” feeds from RiL services, the Hacker News front page, lobste.rs, and a few Google News topical feeds. I like the diversity of topics I get from it, but it’s also very susceptible to things coming through in waves. The past few days it has been the Mythos model, a spurt of Gemini features, Iran Iran Iran, reaction and counter-reaction, and counter-counter-reaction to a few high-profile articles about tech.

The triage UI lets me train the triage service on topics, but when I designed it I designed it less as a fine control and more as a rolling, broad collection of signals. I do have a feature that lets me block authors, but I don’t use it the way I think a lot of people would, to get rid of people I don’t agree with. I use it because some reporters cover things I never want to read about, and it’s a way to just whack out big tranches of material once I can see that the author covers a beat I don’t care about. Generally, though, the system is slowly and gently learning from what gets skipped, or very broadly named.

So when a big topical swell comes through, I haven’t had a way to deal with it. I don’t want to downscore the topic du jour because I don’t want to drive it from sight forever. I just want, after seeing the 10th article about it go by in the triage tool, to make it go away for a bit.

So this evening I built that feature in the form of a topic snooze:

I’ve got a Haiku agent doing the scoring on every article that passes into the system anyhow. It’s consulting my existing preferences and attention patterns and providing a little summary about why it’s scoring each article the way it does. So now I’m also using that inference pass to extract three or four likely topics from each article during scoring. The prompt is a pretty simple “figure out three things this could be about at a middling level of specificity.”

Those topics go into a table that records the topic and has columns for when it was first seen, when it was last seen, and how often it has been seen. While the topic is “live,” the system is just tallying and exposing the topic in the feedback UI for each article. If I triage an article, the UI shows me the inferred topics so I can choose to snooze them. If a topic gets snoozed, any articles it’s attached to that haven’t been selected for reading later get triaged out on the spot. For the duration of the topic’s “aliveness” – its rolling daily appearance average is above some threshold per day – articles about that topic get skipped without impacting the overall interest score for that topic. Once the topic slides below the “aliveness” threshold, articles matching it are allowed back into the queue.
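Roughly, the bookkeeping described above could look like the sketch below. This is not the real implementation: the class and method names, the three-day window, and the threshold of two appearances per day are all assumptions, and it ignores the separate snooze-duration setting and the "already selected for reading later" exemption.

```python
from collections import Counter
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class TopicRecord:
    first_seen: date
    last_seen: date
    times_seen: int = 0
    snoozed: bool = False
    per_day: Counter = field(default_factory=Counter)  # appearances per date


class TopicTable:
    def __init__(self, window_days: int = 3, alive_threshold: float = 2.0):
        # Both parameters are hypothetical tuning knobs.
        self.window_days = window_days
        self.alive_threshold = alive_threshold
        self.topics: dict[str, TopicRecord] = {}

    def record(self, topic: str, day: date) -> None:
        """Tally one appearance of a topic extracted during scoring."""
        rec = self.topics.setdefault(topic, TopicRecord(first_seen=day, last_seen=day))
        rec.last_seen = max(rec.last_seen, day)
        rec.times_seen += 1
        rec.per_day[day] += 1

    def daily_average(self, topic: str, today: date) -> float:
        """Average appearances per day over the rolling window ending today."""
        rec = self.topics.get(topic)
        if rec is None:
            return 0.0
        days = [today - timedelta(days=n) for n in range(self.window_days)]
        return sum(rec.per_day[d] for d in days) / self.window_days

    def is_alive(self, topic: str, today: date) -> bool:
        return self.daily_average(topic, today) >= self.alive_threshold

    def snooze(self, topic: str) -> None:
        if topic in self.topics:
            self.topics[topic].snoozed = True

    def should_skip(self, article_topics: list[str], today: date) -> bool:
        """Skip only while a snoozed topic is still surging."""
        return any(
            t in self.topics and self.topics[t].snoozed and self.is_alive(t, today)
            for t in article_topics
        )
```

Because the skip decision is separate from scoring, snoozing never touches the long-term interest score for a topic. One simplification to be aware of: this sketch never clears the snoozed flag, so a topic that surges again later would be skipped again without asking.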

I think it will be useful, and I made sure to include UI that lets me tune the params for defining snooze duration and the aliveness state for a topic. The nice thing about a tool like this is that it’s an opportunity to contemplate what harm would be done by not seeing an article I would have otherwise if I get something wrong with this, which is pretty much “none,” and that is a really good thing to remember.

It is also a variation on a theme that continues to emerge and evolve during this current period of vibecoding assorted tools and doing more to work inference into them than I was a year ago:

Even though the stakes are pretty low if the system gets it wrong, I always want my observation port and my knobs:

Inference is great in the spaces, doing things that I’d just consume a dependency to get (e.g. topic extraction) or understanding roughly – well enough – what my assorted likes and dislikes add up to for a given article. But I still want to be in the loop. Human in the loop doesn’t always have to mean “human hovering over the lever,” it can just mean “human nudging the flow this way and that.”

I want the system to gradually attain more and more autonomy as I stick to using it. I actually like it well enough now that I don’t even use RSS readers: I do triage in the mobile or desktop UI, and I use a RiL service to read what comes out the other side. Over the course of the day, about 8 percent of what comes through the system gets flagged for reading, and about 35 percent is auto-skipped before I have to triage it, either due to a deterministic rule (an author) or inference scoring.

When I pull the stats, I see that I’ve only overridden 2.3 percent of the inference system’s decisions. If I doubled the “kill with no review” threshold, I would end up not even seeing 40% of the total volume of content in feeds I’m subscribed to. I had Claude pull the numbers and did a quick sanity check with items I starred for later reading within that threshold, and it looks like I’d probably have 1.9% “regrettable autodeletes” if I just let the system become that much more aggressive.

I don’t have numbers on how much time I spend quickly reviewing kill proposals right now, but having 40% fewer things to even consider in exchange for maybe missing a good article or two a day seems like a decent tradeoff.
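The sanity check is easy to reproduce in a few lines. The sketch below uses a tiny made-up history, not my real numbers, and it assumes "regrettable autodelete" means "an item below the kill threshold that I had starred"; the 0-10 score scale and the threshold values are also assumptions.

```python
from dataclasses import dataclass


@dataclass
class ScoredItem:
    score: float   # inference score, assumed to be on a 0-10 scale
    starred: bool  # whether the item was later starred for reading


def autokill_tradeoff(items: list[ScoredItem], threshold: float) -> tuple[float, float]:
    """Return (share of all items auto-killed, share of killed items that were starred)."""
    killed = [item for item in items if item.score < threshold]
    kill_share = len(killed) / len(items) if items else 0.0
    regret_rate = sum(item.starred for item in killed) / len(killed) if killed else 0.0
    return kill_share, regret_rate


# Entirely hypothetical history, just to exercise the function.
HISTORY = [
    ScoredItem(1, False), ScoredItem(2, False), ScoredItem(2, False),
    ScoredItem(3, False), ScoredItem(4, False), ScoredItem(5, True),
    ScoredItem(5, False), ScoredItem(6, False), ScoredItem(8, True),
    ScoredItem(9, True),
]

current = autokill_tradeoff(HISTORY, threshold=3)
doubled = autokill_tradeoff(HISTORY, threshold=6)
print(f"threshold 3: kill {current[0]:.0%}, regret {current[1]:.1%}")
print(f"threshold 6: kill {doubled[0]:.0%}, regret {doubled[1]:.1%}")
```

Running the same function across a range of thresholds gives the whole tradeoff curve instead of a single before/after comparison.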

09-23-25-26-29-33-35-39-46 ... 58

12 years ago the Heartbleed vulnerability was disclosed and I started my birthday with an emergency meeting of engineering staff. I had just started leading the tech writing team at Puppet. While Puppet itself was fine, we knew that customers were probably running it on platforms that weren’t fine, and everyone agreed that we needed to help our users, so we did it with documentation about how to patch it.

I couldn’t contribute anything to the docs themselves: We had two great writers on that, so I just got on with my day. The docs got written, and we staged them for publication, but couldn’t publish until nine or ten that evening. So after a day of feeling a little useless for not being able to help with the docs, I realized there was somewhere I could help: I could send the team home and wait around until we got the word it was okay to release our docs and the accompanying blog post.

So I called Al and told her I’d be late, got dinner downtown, then lurked around the office in the Pearl District waiting for the word so I could pop open a shell and run the deployment.

Someone on the marketing team who was waiting around to ship the blog post stopped by and asked, “isn’t it your birthday?” Yeah, I said, but we had these docs to push and the team had done all the hard work, so I figured I’d wrap it up so they could get on with their evenings.

“That sucks,” she said. “Sorry.”

“Well,” I said, “I don’t know how many users we have but I know it’s a lot. And these docs are going to help them a lot. So it’s hard to complain: A birthday spent helping all those people seems like a good birthday present.”

“Fair enough,” she said, and we sat in companionable silence until we could click our respective “post” or “deploy” buttons and head home.

I got home pretty late that night. Al had gone to sleep, and there was a cupcake on the counter with a candle sticking out of it, and that was it for the birthday where I noted that I could officially round my age up to 50.

Some birthdays I work, some birthdays I don’t. I spent my 25th birthday in Basic Training. My 26th I was in a retransmission station in Korea. My 9th birthday didn’t officially happen because it was suspended over an infraction that caused my mother to believe I should spend the day contemplating what it would be like to not be alive to have birthdays. There may have still been a cake, but the three months leading up to it were spent contemplating the void, which is probably where posts like the one I wrote three days before Heartbleed Day come from.

This birthday I worked, and it was a pretty good day: I had my weekly 1:1 with my favorite work person, I had a quiet conversation with someone on my team where we reaffirmed the best parts of our connection, and the last meeting of the day was spent with a colleague in Australia, figuring out how to help her do a thing she’s trying to do. Nothing that moves any cosmic needle or dents the universe. Just another day.

Today I re-read that birthday post I wrote three days before Heartbleed Day, as I do every year, always wondering if the math is going to come out different for me this time, or if the answer will change, and it has not:

Mostly I think we’re born in a house that’s on fire, and there’ll be a moment between flame and ash.

We’ll need to have been kind.

DIY/Self-hosted RSS triage, RiL, and bookmarks

Wallabag seems to run a lot better than it used to the last time I self-hosted it. Linkding is as good as it was the last time. So I’ve got a full self-hosted reading stack:

- The RSS triager

- Lets me set good/bad topics

- Lets me block, downweight, or upweight authors

- Lets me block, downweight, or upweight topics

- Learns from my “starred” links

- Uses weighting and scoring to filter feeds and serve them via the Fever API

- Automatically passes high-scoring articles through to my RiL service

- Wallabag for RiL (Replaces any/all of Readwise, Pocket, Instapaper)

- Starred in Wallabag passes the link through to the permanent link saving service

- Decent web app, iPhone app, and looking forward to trying out its Android app on the Boox

- Linkding for my long-term links archive

- Replaces pinboard.in

- Has a handy search injection sidebar – see what you’ve already bookmarked alongside new search results

- Understands the classic bookmarks.html format

- High quality iOS app

The triager is mine because nothing does quite what I want there. feedly tries to, but why pay for something less reliable and less tailored than what I could make for myself?

The RiL service is fungible. I considered going back to Instapaper or just hanging on to Readwise, but I don’t need to. The bookmarking service is fungible, too. I’ve just had good luck with Linkding. Both come with nice mobile apps and a number of extensions, etc. that I don’t have to make for myself.

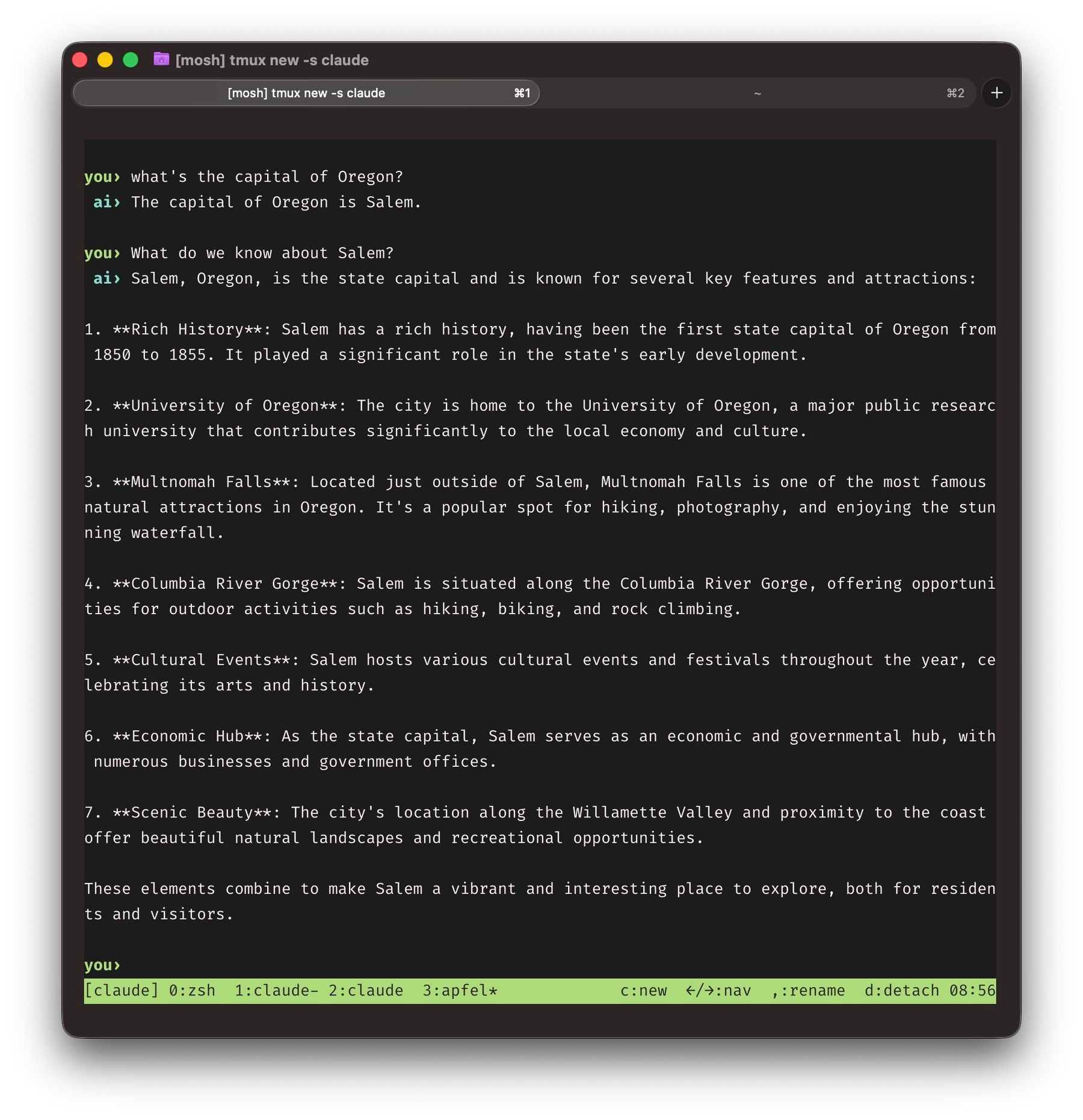

apfel gives you an interface to the local LLM Apple ships on macOS. It has a small context window and is interesting perhaps for the way you could use it to wire inference into a shell script. It’s right about some things. I hope nobody from Planet Apple plans a roadtrip to Oregon with it.

I guess I reinvented feedly

I was sitting around over coffee this morning thinking to myself, “the current regime of blocking authors as a proxy for blocking the topics they cover is fine and all, but I don’t know if I want to be locked into Readwise forever. What’d be nice would be a way to permanently cleanse the feeds, and also fine-tune that cleansing as sites change, authors come and go, etc.”

So today I sort of flipped the model from triaging incoming articles, taking an incremental approach to learning my preferences, and pattern-matching on authors to reviewing feeds and pattern-matching alternately on authors, URL characteristics, and a bit of inference around topics:

- Visit a feed I’m tracking

- List the articles

- Click an article with the option to block author, URL pattern, or click “I don’t like this”

- If I click “I don’t like this,” I can click “content type” or “topic,” or fill in a field. Then Haiku takes a pass and proposes some heuristics it can apply to articles in the feed.

That’s just a variation on what I’ve been doing anyhow, but now the tool republishes a private version of the feed that is dynamically filtered on the rules and feedback I’ve provided so I can subscribe to it from any regular old RSS reader or service instead of being locked in to Feedly, Readwise, Inkwell, etc. In Inkwell, the tool can even swap in the cleaned up feed automatically, cutting down on how much triage even has to happen.
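The deterministic half of that filtering (author and URL-pattern blocks) is simple enough to sketch. Everything named here is hypothetical: the `Entry` and `FeedRules` shapes, the sample authors, and the example.com URLs. The inference-proposed topic heuristics aren't modeled, and neither is re-serializing the survivors as a feed.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Entry:
    title: str
    author: str
    url: str


@dataclass
class FeedRules:
    # Store author names lowercased so matching is case-insensitive.
    blocked_authors: set[str] = field(default_factory=set)
    # Regular expressions matched anywhere in the entry URL.
    blocked_url_patterns: list[str] = field(default_factory=list)

    def allows(self, entry: Entry) -> bool:
        if entry.author.strip().lower() in self.blocked_authors:
            return False
        return not any(re.search(p, entry.url) for p in self.blocked_url_patterns)


def filter_feed(entries: list[Entry], rules: FeedRules) -> list[Entry]:
    """Keep only the entries that survive every rule, in original order."""
    return [entry for entry in entries if rules.allows(entry)]


# Hypothetical rules and entries for illustration.
rules = FeedRules(
    blocked_authors={"wire service"},
    blocked_url_patterns=[r"/sponsored/", r"/horoscopes/"],
)
entries = [
    Entry("City council votes on bike lanes", "Staff Reporter", "https://example.com/news/bike-lanes"),
    Entry("Syndicated roundup", "Wire Service", "https://example.com/news/roundup"),
    Entry("Your week ahead", "Staff Reporter", "https://example.com/horoscopes/week"),
]
clean = filter_feed(entries, rules)
```

Keeping the rules as plain data per feed is what makes the "fine-tune as sites change" part cheap: editing a rule changes the republished feed on the next fetch without retraining anything.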

Impeccable & Superpowers

Worth pausing to note how much better Claude Code/Opus 4.6 are than wherever they were at about a year ago. The biggest improvements, given my earliest complaints, are around memory and long-term context preservation: Fewer repeat mistakes, fewer bananas derails.

I’ve also added a couple of tools that have made the experience much better:

Superpowers is a development workflow that adds a set of skills including:

- brainstorming

- writing-plans

- subagent-driven-development or executing-plans

- requesting-code-review

The brainstorming and planning phases involve a lot of helpful, clarifying questions. The spec files it produces are helpful. The reviews it runs catch inconsistencies and gaps. It adds a few minutes to work on any given feature, but the rate of “got it in one” has shot through the roof, and taking a moment to read its specs and plans offers a lot of reassurance that there’ll be fewer misalignments.

On the other end of the process, Impeccable is a set of visual design skills that address gaps in typography, layout, color, visual rhythm, and more. The starting point with an Impeccable session is the /critique skill, which leads to a set of recommendations on which of its many skills to use.

A lot of the stuff Claude Code produced for aSystem/aCloud was very “my first Rails app default view” in quality. A little back and forth with Claude cleaned up the worst of it, but Impeccable acts a lot more like a design partner, asking about the app’s “brand image,” and walking through questionnaires that are a little educational on their own. Pages that felt like long slogs through undifferentiated metadata became a lot more readable very quickly.

Adventures in RSS Curation

I worked more on the RSS triage tool, abstracting both the star/save targets (currently have Inkwell API, pinboard, and Readwise) and the feed sources (currently just Inkwell API and Readwise feed items).

Readwise is a nice addition, because its API tracks your percent completed in an article, and the triage tool is watching for that to go to “100” before it considers an article a good recommendation. Inkwell just had “read/unread” via whatever RSS reader, which sets things to “read” on open (or requires a manual mark-read action). There’s also some interesting signal to be mined from Readwise partial reads, too, so that’s on the list.

Honestly, this could just be called “The Oregonianator,” because most feeds I bother with aren’t so godawful on signal-to-noise. I might skip things because they’re not my cup of tea on another feed, but the Oregonian’s catch-all RSS seems designed to get people to swear off RSS: So much filler, junk, clickbait, and syndicated content. When I wrote the outgoing EiC last year she offered a half-hearted defense of it all that sounded more like a hostage statement, vaguely alluding to the idea that the O’s web operation isn’t beholden to the editorial side. It feels like it’s all premised on a 15-year-old conception of SEO that requires high-churn content. It feels familiar to me because I had to do it for a while.

Architecturally, I could reduce this to a worker on a cron job that passes each article through user rules, then passes the survivors through to beefed up inference. Unlike RSS feeds, which have no state, Readwise would support this approach because things it finds in RSS become articles that can be removed from your queue. The other idea I considered was creating and serving a shadow Oregonian feed that’s been through triage.

For now, though, after a few days of training, I’ve pivoted the tool’s primary interface to a review system: There’s enough training data that the tool’s 0.1 - 1.0 confidence scale is pretty good at south of 0.15 and north of 0.7. So I let the app automatically remove articles at 0.15 or lower, and automatically promote 0.7 or higher. I’ll keep on training and see what I can do to narrow the band. There’s also a queue where I can fish a mistaken deletion out within 24 hours. The traditional triage queue remains, as well, for things that fall in between those two scores.
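The routing itself is about as simple as code gets. A minimal sketch using the thresholds from the paragraph above; the function and constant names are made up, and how the real tool stores deletion times is an assumption.

```python
from datetime import datetime, timedelta

REMOVE_AT_OR_BELOW = 0.15
PROMOTE_AT_OR_ABOVE = 0.70
RECOVERY_WINDOW = timedelta(hours=24)


def route(confidence: float) -> str:
    """Send an article to auto-removal, auto-promotion, or the manual triage queue."""
    if confidence <= REMOVE_AT_OR_BELOW:
        return "remove"
    if confidence >= PROMOTE_AT_OR_ABOVE:
        return "promote"
    return "triage"


def can_recover(deleted_at: datetime, now: datetime) -> bool:
    """A mistaken deletion can be fished back out within the recovery window."""
    return now - deleted_at <= RECOVERY_WINDOW


for score in (0.10, 0.15, 0.40, 0.70, 0.95):
    print(score, route(score))
```

Narrowing the band over time just means moving the two constants toward each other as the training data earns it.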

It makes me want to add more sources, because I’ve got a better way to triage them.

“Folk are getting dangerously attached to AI that always tells them they’re right”

I don’t see this as particularly distinguishable from a large volume of social media interactions generally. Maybe we’ve achieved AGI after all.

www.theregister.com/2026/03/2…

!["Overall, deployed LLMs overwhelmingly affirm user actions, even against human consensus or in harmful contexts," the team found. As for how AI sycophancy affects humans, the team had a considerable sample size of 2,405 people who both roleplayed scenarios and shared personal instances where a potentially harmful decision could have been made. AI influenced participant judgments across three different experiments, they found. "Participants exposed to sycophantic responses judged themselves more 'in the right,'" the team said. "They were [also] less willing to take reparative actions like apologizing, taking initiative to improve the situation, or changing some aspect of their own behavior."](https://cdn.uploads.micro.blog/275515/2026/6bd0078b8d.jpg)

Generational talent.

Using my RSS triage tool, I’ve almost completely isolated the most obnoxious parts of [The O](https://oregonlive.com). They use stringers/syndicators for their thinnest content. Gives me an idea to add an author lookup to check the tool’s memory for associated articles and just permablock them.

I’ve been using macOS screen sharing between MacBook and mini and I’m a little surprised at how responsive it is. No more running upstairs when a CLI process opens a browser for oauth grants or whatever. In fullscreen and dynamic scaling I sometimes forget I’m in Screen Sharing.

A while back I added some instructions to Claude to push back when I have a big idea that is not something I’d call one of my purposes. It took some back and forth to get the right level of pushback, but I appreciate past me for thinking of it.