Capturing aspirations and beliefs in Markdown with anote

This morning I turned denote-tasks into atask (for “agent task”). Now I’m moving on to anote, which is still a bit fuzzy in my head, but beginning to take shape:

I’m not really interested in the “order me a pizza,” or “make sure I always have deodorant” end states. And I don’t like the landscape any agentic workflow for a consumer has to operate in. I tossed off a quip about how browser automation to do agentic workflows is turning me into a Maoist not because “I hate capitalist pizza places” or whatever, but because I remember the way I felt after finally torturing AppleScript into doing UI scripting for some Mac app that its slob of a developer couldn’t bother to build an AppleScript library for: It was plainly just the very worst thing in the world. There was nothing human readable about it at all. There was nothing I’d ever be able to turn my back on at all. Brittle and opaque. I was in that stage of my automation journey where I was usually blaming myself for failed experiments, but this was a rare case, for the time, of feeling completely cheated by the tools available.

We’re cheated by the tools today. All these silos. You can make agentic workflows to do things, but the underlying infra sucks for it, and a lot of the things people want to do are forbidden by the silos commercial imperatives create. Want to automate a simple flow for a service you hold an account on? Probably a ToS violation even if you’re paying for a premium tier of some kind. And probably a security nightmare.

So for now, whatever. I didn’t create the problem, I don’t want to think about the problem. I think browser automation is a bad idea. It’s inelegant and wasteful. Rube Goldberg shit. And just try using it with some everyday sites with their either inept or automation-hostile tire fire markup. Just, no thanks. And as a digression, all the hooting and whingeing of the tech press aside, I’m kinda +1 on Apple’s “slowness” to improve Siri with LLMs, because Apple’s fundamental neuroses are on full display, but in a way that is probably better for me as a user. Siri sucks, but we’re in real “frying pan/fire” territory with generative AI. Good for Apple. Take your time.

Anyhow, not worrying about how to order pizzas or deodorant, and not primarily a software developer, so what I want to do with agents is more around persistence, executive function augmentation, connection-making, and pattern-matching.

Oh, also really put off by the way I’m seeing marketing people on LinkedIn doing the whole “it’s a feature not a bug” thing with LLM randomness, intoning that these are “stochastic systems” that demand a new kind of leadership to avoid getting left behind. I am so glad that my content marketing days, however brief, were spent marketing something helpful and good.

My local rig is running LocalGPT. My primary uses for it involve planning my day/week, capturing ideas, wrangling tasks/projects, and knowing about me. During setup, I fed it a number of corporate personality tests I’ve taken over the years, had it step me through a 20-question “getting to know me” workflow, and gave it a Markdown document with my org chart and a few observational notes on everyone in it.

My initial approach for outside context was pretty exuberant, but I cooled my jets after a day or two because I’d piled too much context in and it was beginning to get weird and scattered.

My initial approach for inside context was to just have a directory of more or less freeform Markdown/YAML. That led to lossiness as the LLM struggled to make sense of the hodgepodge of concrete task/project metadata and human musing. So I woke atask back up to provide more structure: There’s JSON output, better automation for the CLI tool, and a SKILL.md agents can use to understand how to use atask in planning and interaction sessions. My task data remains my own, I don’t have the overhead of an MCP, the sandboxed agent just gets access to what it needs, and it’s pretty fast.

So to anote for the things that aren’t tasks … they’re just ideas and relationships. Same underlying data format: Denote-style filenaming, Markdown/YAML, affordances for humans and machines, but with an ideation/incubation/continuous improvement workflow where the entry point is “hey I had this idea,” the exit point is “I ended up here with this idea and rejected it,” or “I ended up here with this idea and accepted it, implemented it, etc.” The SKILL is there to guide the agent into making sense of, working with, and accounting for that corpus of ideas and understandings, rather than leaning on a single flat Markdown file the way the assorted -claws currently do.
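To make “Denote-style filenaming, Markdown/YAML” a little more concrete, here is a minimal sketch of what capturing one idea could look like. It follows the general Denote pattern of a timestamp identifier, then `--` and a slugged title, then `__` and underscore-separated keywords. This is not anote’s actual code: the function names and the front matter fields (`title`, `kind`, `status`) are hypothetical, picked only for illustration.

```python
import json
import re
from datetime import datetime


def slugify(text: str) -> str:
    """Lowercase the text and join its ASCII letter/digit runs with hyphens."""
    return "-".join(re.findall(r"[a-z0-9]+", text.lower()))


def denote_filename(title, keywords, when, ext="md"):
    """Build a Denote-style name: IDENTIFIER--title-slug__keyword1_keyword2.ext"""
    identifier = when.strftime("%Y%m%dT%H%M%S")
    name = f"{identifier}--{slugify(title)}"
    # Keywords are single tokens, so drop any hyphens the slug step produced.
    tags = [slugify(k).replace("-", "") for k in keywords]
    tags = [t for t in tags if t]
    if tags:
        name += "__" + "_".join(tags)
    return f"{name}.{ext}"


def render_note(title, kind, status="seed", body=""):
    """Return Markdown with YAML front matter (field names are assumptions)."""
    front_matter = "\n".join([
        "---",
        f"title: {json.dumps(title)}",  # JSON-quoted so colons stay valid YAML
        f"kind: {kind}",
        f"status: {status}",
        "---",
    ])
    return f"{front_matter}\n\n{body}\n"


if __name__ == "__main__":
    when = datetime(2025, 1, 2, 3, 4, 5)
    print(denote_filename("Hey, I had this idea", ["belief", "work"], when))
    print(render_note("Hey, I had this idea", "belief"))
```

The filename carries enough metadata for a human skimming a directory, and the front matter gives an agent something structured to read without parsing the prose.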

As I’ve sat with Claude this morning, I’m up to “working CLI that can capture ideas into Markdown files,” and there are a couple of taxonomies rattling around: Everything is an “idea.” Some ideas are “aspirations,” things you want to do, make, or build. Some ideas are “beliefs,” things you consider and either accept or reject.

The conceptual glue lives in the SKILL, where the agent is prompted to work with these things in distinct fashions depending on their stage:

| Stage | Aspiration | Belief |
|---|---|---|
| seed | seed | seed |
| draft | draft | draft |
| engaged | active | considering |
| rethinking | iterating | reconsidering |
| arrived | implemented | accepted |
| shelved | archived | archived |
| no | rejected | rejected |
| fizzled | dropped | dropped |
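
The stage table reads naturally as a lookup from a shared stage to a kind-specific status. A rough sketch of that mapping follows, assuming nothing about how anote actually stores it: `STAGES`, `status_label`, and `stage_for` are hypothetical names, and only the stage and status words come from the table.

```python
# Shared stage -> status label for each kind of idea, copied from the table.
STAGES = {
    "seed": {"aspiration": "seed", "belief": "seed"},
    "draft": {"aspiration": "draft", "belief": "draft"},
    "engaged": {"aspiration": "active", "belief": "considering"},
    "rethinking": {"aspiration": "iterating", "belief": "reconsidering"},
    "arrived": {"aspiration": "implemented", "belief": "accepted"},
    "shelved": {"aspiration": "archived", "belief": "archived"},
    "no": {"aspiration": "rejected", "belief": "rejected"},
    "fizzled": {"aspiration": "dropped", "belief": "dropped"},
}


def status_label(kind: str, stage: str) -> str:
    """Return the status word used for this kind of idea at a shared stage."""
    return STAGES[stage][kind]


def stage_for(kind: str, label: str) -> str:
    """Reverse lookup: find the shared stage behind a kind-specific status."""
    for stage, labels in STAGES.items():
        if labels[kind] == label:
            return stage
    raise ValueError(f"unknown {kind} status: {label!r}")


if __name__ == "__main__":
    print(status_label("belief", "engaged"))
    print(stage_for("aspiration", "implemented"))
```

Within each kind the status words are unique, so the reverse lookup is unambiguous even though aspirations and beliefs share labels like “archived” and “rejected.”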

The thing I’m trying to deal with is that I’ve increasingly found LocalGPT useful for kicking ideas around (I fire up wisprflow and free associate and it chatters back at me using that loose collection of things it knows about me) but sometimes it is just … Jesus Christ. Like, “sorry, that dog was distracting me with its barking” leads to “do you want me to find you contact information for animal control,” or worse.

It took some random work noodling and wanted me to go to HR by the time it was done. It took some additional, unstructured prompting to get it to memorize that senior directors don’t go to HR for that kind of thing, and that the problem I am trying to solve is entirely within me to solve. Now that it “knows” that, it’s a more helpful mirror because it doesn’t suggest stuff I don’t think is okay to begin with, and because I can see it making connections with the existing corpus (e.g. those personality profiles) before proposing ideas.

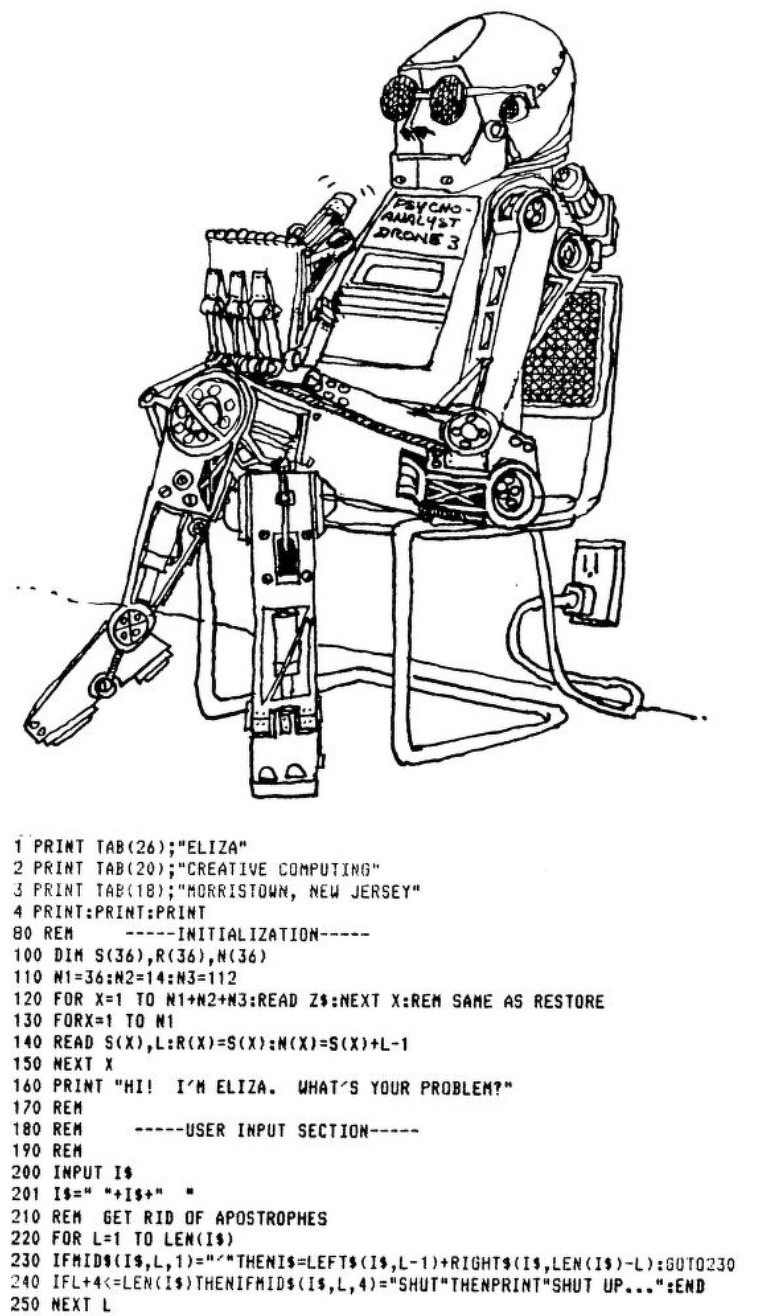

(I am wondering if there’s an idea to explore that for every technology person of a certain age, there is a foundational or formative BASIC program. Given how many hours I lost to Civilization and SimCity over the years, it makes sense that “Hamurabi” was a fave. And for this line of experimentation right now, it makes sense that I was also deeply intrigued by, then immediately irritated by, “ELIZA.”)

“You know, Mike, you could do this with other humans.”

But I kind of don’t want to. As much as I like to be in collaborative settings and interact with people, there is a part of me that is probably more intensely private than many, and right now at work I am very much in “go it alone” mode for assorted reasons. It is helpful to have a big, connected mesh of tasks, projects, and ideas to ideate with. I am after persistence for things I care about as a human being who does things besides write code.

The SKILL.md is plenty useful for understanding what anote is about so far.